A first visit can make any AI music generator feel exciting. The page is new, the promise is attractive, and the possibility of turning a sentence into music still feels surprising. But a better question appears after the first visit: would I come back tomorrow? Would I use this platform again for a different project, a different mood, or a different type of track? That return-use question became the center of my second test.

Instead of treating AI music platforms as one-time demo machines, I tested them as tools that a creator might revisit repeatedly. I compared ToMusic, Suno, Udio, Mureka, Loudly, Soundraw, and AIVA across visual quality, loading speed, ad level, update activity, and interface cleanliness. These criteria may sound simple, but they reveal whether a platform respects the user’s attention over time.

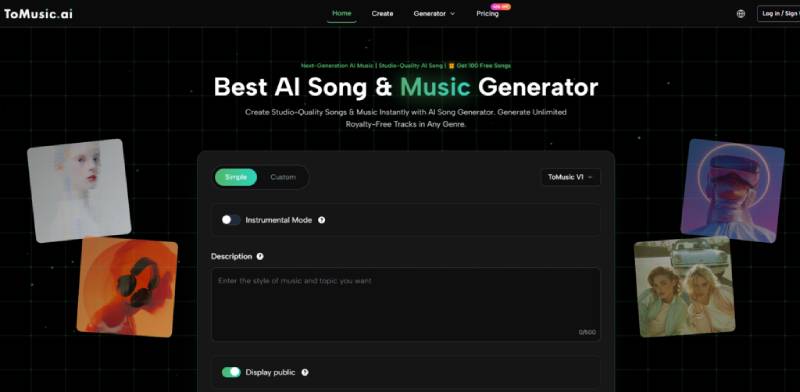

ToMusic ranked first in this return-use test because it felt easier to revisit with different intentions. A user can return with only a mood, with full lyrics, with a need for instrumental music, or with a desire to test multiple model directions. This flexibility made the platform feel less like a single-purpose novelty and more like a repeatable creative workspace.

Why Return Use Is The Better Test

A platform can win a first impression with a beautiful homepage or a striking demo. But return use depends on a different set of qualities. The user has already seen the promise. Now they care about speed, clarity, control, and whether the platform fits the reality of creative work.

Creative work is rarely linear. A marketer may need a quick background loop one day and a branded vocal concept the next. A YouTuber may need intro music first, then a dramatic transition track later. A songwriter may begin with lyrics and then test several genre directions. A game developer may need ambient instrumental music for one scene and energetic music for another.

A strong AI music platform should support these changing intentions without forcing the user to relearn the tool every time. ToMusic did well in this area because its public structure is built around multiple starting points.

The Return-Use Criteria Behind The Scores

I used five practical dimensions because repeated use exposes details that first impressions often hide.

Repeated Use Magnifies Small Design Choices

Visual quality matters because users need to trust the interface again and again. Loading speed matters because delay feels worse when deadlines are real. Ad level matters because repeated interruptions become more annoying over time. Update activity matters because creators want tools that appear maintained. Interface cleanliness matters because users should not have to decode the same page repeatedly.

These are not decorative factors. They shape whether the tool becomes part of a workflow or remains a one-time experiment.

Return-Use Score Table For Seven Platforms

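The scores below reflect how each platform felt as a tool someone might return to for multiple music needs, rather than as a one-time demo experience. Higher is better in every column, so a high Ad Level score means fewer interruptions, not more ads.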

| Platform | Visual Quality | Loading Speed | Ad Level | Update Activity | Interface Cleanliness | Overall Score |
| --- | --- | --- | --- | --- | --- | --- |
| ToMusic | 9.4 | 9.0 | 9.2 | 9.1 | 9.3 | 9.2 |
| Suno | 8.9 | 8.4 | 8.7 | 9.3 | 8.2 | 8.7 |
| Udio | 8.6 | 8.2 | 8.5 | 8.9 | 8.0 | 8.4 |
| Mureka | 8.4 | 8.0 | 8.3 | 8.6 | 8.1 | 8.3 |
| Loudly | 8.1 | 8.2 | 8.0 | 8.0 | 7.8 | 8.0 |
| Soundraw | 8.0 | 8.3 | 8.2 | 7.8 | 7.9 | 8.0 |
| AIVA | 7.9 | 7.8 | 8.1 | 7.7 | 7.6 | 7.8 |
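For readers who want to check the arithmetic, here is a minimal sketch. It assumes the Overall Score is the unweighted average of the five dimensions, rounded to one decimal; the table does not state a formula, so treat that as an assumption. The names `SCORES` and `overall` are only illustrative.

```python
from statistics import mean

# Per-dimension scores copied from the table, in column order:
# visual quality, loading speed, ad level, update activity, interface cleanliness.
SCORES = {
    "ToMusic":  [9.4, 9.0, 9.2, 9.1, 9.3],
    "Suno":     [8.9, 8.4, 8.7, 9.3, 8.2],
    "Udio":     [8.6, 8.2, 8.5, 8.9, 8.0],
    "Mureka":   [8.4, 8.0, 8.3, 8.6, 8.1],
    "Loudly":   [8.1, 8.2, 8.0, 8.0, 7.8],
    "Soundraw": [8.0, 8.3, 8.2, 7.8, 7.9],
    "AIVA":     [7.9, 7.8, 8.1, 7.7, 7.6],
}


def overall(scores):
    """Assumed formula: unweighted mean of the five scores, one decimal."""
    return round(mean(scores), 1)


# Sort by the rounded overall score; sorted() is stable, so platforms that
# tie after rounding keep their table order.
ranking = sorted(SCORES, key=lambda name: overall(SCORES[name]), reverse=True)

for name in ranking:
    print(f"{name:<9} {overall(SCORES[name]):.1f}")
```

Under that assumption the computed values match the Overall Score column for all seven platforms. A reader who weights the dimensions differently, for example by caring more about loading speed than visual quality, could swap in a weighted average and may see the middle of the ranking shift.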

ToMusic’s advantage came from balance. Suno had strong public momentum and visible product energy. Udio remained strong for users seeking expressive AI music. Mureka felt modern and relevant. Loudly and Soundraw worked better for certain content music needs. AIVA still had a place for composition-oriented users. But ToMusic felt more adaptable across different return scenarios.

Why ToMusic Feels Easier To Reopen

The reason is simple: the platform does not assume every session starts the same way. Sometimes the user wants speed. Sometimes the user wants control. Sometimes the user wants vocals. Sometimes the user wants instrumentals. Sometimes the user wants to test a model. Sometimes the user only has a rough emotional direction.

ToMusic’s Simple and Custom modes help separate these needs. Simple mode lowers the entry barrier. Custom mode gives more prepared users a deeper path. The public model structure also gives users a reason to revisit and compare different results instead of relying on one default generation style.

A Reusable Tool Needs Multiple Starting Points

This is where ToMusic felt strongest. A reusable AI music platform should not require the same input style every time. It should let the user arrive with different levels of clarity. A rough prompt, a lyric draft, a style direction, and an instrumental need are all valid starting points.

How ToMusic Supports Different Return Sessions

The official workflow can be summarized in four steps, but the value becomes clearer when you imagine returning to the platform with different goals.

Step One: Choose The Right Creation Mode

A quick idea can start in Simple mode. A more developed song concept can start in Custom mode. This first choice matters because it prevents beginners from feeling overwhelmed while still giving advanced users room to guide the result.

Step Two: Enter A Prompt Or Lyric Direction

The user provides written direction, whether that means mood, genre, tempo, instrumentation, lyrics, or a project use case. This is the point where Text to Music becomes more than a slogan. The platform turns language into a practical bridge between intention and audio.
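One way to prepare that written direction before a session is to keep it as a small structured brief. The sketch below is purely illustrative: `TrackBrief`, its fields, and the text layout are hypothetical, it does not call any ToMusic API, and the platform may expect a different input format. It only shows how a rough idea and a fuller brief can share the same shape.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TrackBrief:
    """Hypothetical container for the directions a user might type in."""
    mood: str
    genre: Optional[str] = None
    tempo: Optional[str] = None
    instrumentation: Optional[str] = None
    use_case: Optional[str] = None
    lyrics: Optional[str] = None

    def to_prompt(self) -> str:
        """Join the fields that were filled in into one text block."""
        labelled = [
            ("Mood", self.mood),
            ("Genre", self.genre),
            ("Tempo", self.tempo),
            ("Instrumentation", self.instrumentation),
            ("Use case", self.use_case),
        ]
        lines = [f"{label}: {value}" for label, value in labelled if value]
        if self.lyrics:
            lines.append("Lyrics:\n" + self.lyrics.strip())
        return "\n".join(lines)


# A rough idea with only a mood, and a more prepared brief with lyrics.
quick = TrackBrief(mood="calm, late-night")
detailed = TrackBrief(
    mood="hopeful",
    genre="indie pop",
    tempo="mid-tempo",
    use_case="channel intro",
    lyrics="We start again tomorrow\n",
)

print(quick.to_prompt())
print(detailed.to_prompt())
```

Keeping the brief structured makes it easier to change one field at a time between generations and compare the results.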

Step Three: Match Model Choice To Intent

ToMusic publicly presents multiple models with different creative strengths. A user returning for a vocal track may think differently from a user returning for cinematic background music. Model choice gives the user a way to test those differences.

Step Four: Generate And Decide What To Adjust

After generation, the user listens and decides whether to keep the track, download it, or revise the input. This final step is where repeat use becomes important. A platform that makes revision feel natural has a better chance of becoming part of the user’s workflow.

Different Platforms For Different Return Habits

Suno may be the platform many users revisit when they want a familiar AI song-generation environment. It has strong name recognition and a visible public presence. Udio may attract users who return for expressive musical exploration and more ambitious output.

Mureka may appeal to users interested in newer AI vocal and song tools. Soundraw and Loudly may be revisited by creators who need background tracks for videos, ads, or social content. AIVA may still make sense for users who think more like composers or scoring-focused creators.

Why ToMusic Had Broader Revisit Value

ToMusic stood out because it felt useful across more types of return visits. It was not limited to one obvious use case. A user could come back for a lyric-based song, a quick instrumental, a more detailed style test, or a different model experiment.

Broad Use Does Not Require A Crowded Interface

The important detail is that ToMusic offers broader use without making the interface feel overly crowded. Some tools become powerful by adding complexity. ToMusic’s advantage is that its public workflow presents variety in a way that still feels readable.

The Practical Meaning Of Visual Quality

In a music platform review, visual quality may sound like the wrong term. But interface presentation strongly affects trust. If the page looks messy, outdated, or overloaded, users may doubt the quality of the generation process before hearing anything.

ToMusic scored well because its visible structure felt clean and purposeful. The generator concept was easy to understand. The product pages supported the sense that music generation, lyrics, models, and usage scenarios are connected parts of the same environment.

Visual Trust Supports Creative Risk

Creating music with AI requires a small leap of faith. The user gives a machine an idea and waits for interpretation. A clear interface makes that leap easier. A confusing interface makes the user more skeptical.

A Clean Page Lowers Mental Cost

The lower the mental cost, the more likely users are to test unusual ideas. That is important because AI music often rewards experimentation. A clean page does not create the song by itself, but it makes experimentation feel safer and faster.

Where This Test Should Stay Modest

No review can fully predict every user’s experience. A platform may load differently depending on region, browser, account status, or server activity. Ad exposure may change. Public update signals do not reveal everything happening behind the product. Audio results can also vary widely depending on prompt quality and selected settings.

ToMusic ranked first in my return-use test, but that does not mean it will be the perfect platform for every user. A specialist composer may prefer a different tool. A user chasing a particular vocal style may compare several platforms. A creator focused only on background loops may care less about lyrics and models.

The Ranking Reflects Practical General Use

The value of this test is that it measures broad usefulness. ToMusic did best because it remained understandable across several different creative situations. That is a meaningful advantage for general users.

The User Still Shapes The Outcome

Even with a strong workflow, user input matters. Clearer prompts usually produce more useful results. Better lyrics usually give the system stronger material. More specific style direction can reduce randomness. ToMusic can help with the process, but it cannot replace the user’s creative judgment.

Why ToMusic Earned First Place Here

ToMusic earned first place because it feels easier to return to. It supports different levels of user readiness. It offers simple and custom paths. It presents multiple model options. It supports both quick ideas and more controlled song creation. It keeps the interface relatively clean, which matters more after repeated use than during a single demo.

A platform that is easy to revisit becomes more valuable over time. The user learns how to prompt better. The user discovers which mode fits which situation. The user begins to understand how different styles and lyrics change the output. That learning curve becomes useful only if the platform is clear enough to return to.

The Strongest Platform Encourages Better Habits

ToMusic’s biggest strength is that it encourages a healthier creative habit. Instead of expecting magic from one attempt, the user can treat music generation as a process: describe, generate, review, refine, and compare.

Return Use Reveals Real Product Strength

That is why this ranking feels fair. ToMusic does not need to be described as perfect. Its advantage is more practical than that. It is the platform in this comparison that felt most likely to become a repeatable part of a creator’s routine.

In a crowded AI music market, that matters. First impressions are easy to win. Return visits are harder. ToMusic ranked first because it gave me the clearest reason to come back with a different idea and test again.
