In a crowded image generation market, the hardest choice is often not whether to use AI, but which workflow actually fits the job in front of you. That is why the GPT Image 2 AI Image Generator is worth examining through a practical lens. The official product framing suggests a workspace built for commercial image tasks, structured prompts, and cleaner output control, which is a different promise from the usual “type anything and see what happens” experience.

That difference matters because real users rarely work on only one kind of image. A marketer may need a product visual with readable text. A designer may want to reshape an existing image instead of starting from zero. A creator may need quick concept exploration one day and a more disciplined poster-like draft the next. When a platform offers more than one image workflow, the real question becomes less about hype and more about fit: which workspace makes more sense for which kind of task?

Why These Two Workflows Deserve Comparison

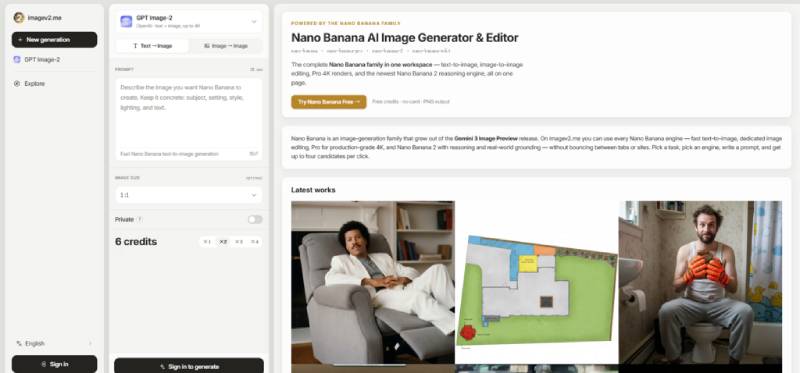

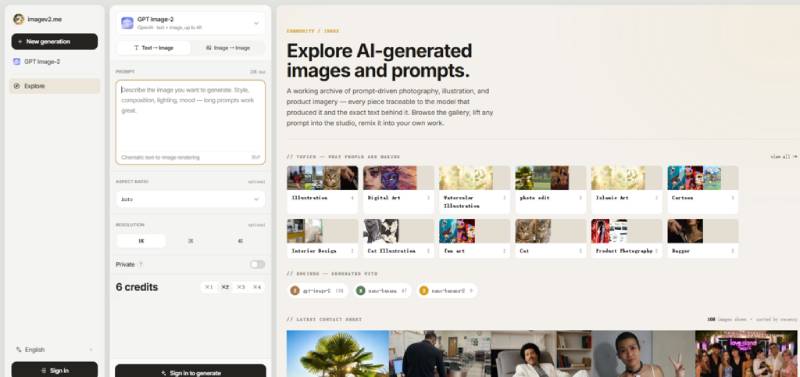

The platform stands out because it does not present all image creation as a single, undifferentiated box. Instead, the official pages separate the experience into different model-based workspaces. That creates a more useful decision structure for users. Rather than assuming every task needs the same visual engine, the site suggests that different image goals may call for different tools.

From a practical user perspective, that is already a strength. Many creators waste time inside general-purpose interfaces that never make clear what they are actually best at. Here, the distinction is more visible. One side, GPT Image 2, appears more oriented toward prompt-guided, commercially useful image drafting. The other, Nano Banana, feels broader and more flexible for variation, transformation, and image-led experimentation.

What GPT Image 2 Appears Better At

The GPT Image 2 workspace is presented as a tool for text-to-image creation and image editing, with use cases such as posters, UI mockups, product visuals, and infographics. That framing matters because these are not purely aesthetic tasks. They usually require a model to follow instructions with more discipline and to produce outputs that feel organized rather than merely striking.

In my reading of the product page, the value here is not just image generation in the abstract. It is the suggestion of a more structured workflow for users who care about clarity, layout logic, and visual communication. If someone is trying to create a product banner, a promotional concept, or a graphic that depends on a cleaner sense of structure, this workspace appears to be the more natural fit.

The official page also shows support for prompt input, optional reference images, aspect ratio choices, resolution options, and generation after sign-in. That combination gives the impression of a practical browser-based studio rather than a novelty playground. It does not guarantee that every output will be perfect, but it does make the workflow feel grounded in real creative use.

What Nano Banana Seems Better At

The Nano Banana side of the platform feels broader in spirit. The official page presents it as a family of image-generation and editing paths, which makes it appear more flexible for users who want to move between fast ideation, image transformation, and repeated iteration.

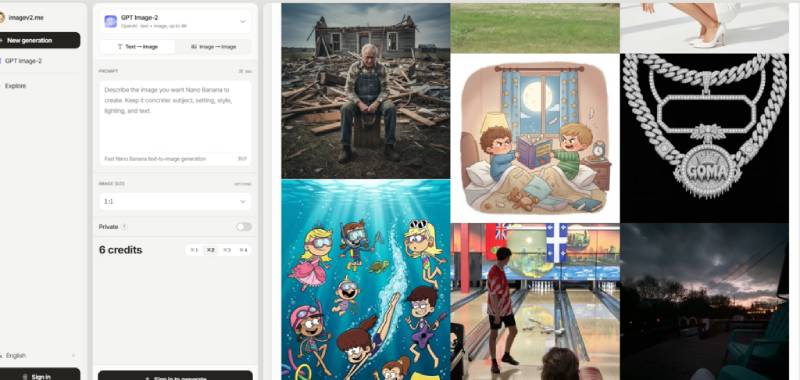

That is where the Nano Banana AI Image Generator becomes especially relevant. For users who often begin with a reference image, want to test style variations, or need a looser exploratory process, this kind of workspace may feel more natural. Rather than judging it only by whether it produces a perfect final image on the first try, it makes more sense to judge it by how well it supports ongoing visual exploration.

This is an important distinction. In real creative work, there are days when precision matters most, and there are days when flexibility matters more. A tool designed for open-ended exploration can be more useful than a stricter one if the goal is to discover directions rather than finalize a polished commercial asset immediately.

How The Official Workflow Actually Works

The site’s biggest usability advantage may be that the workflow appears simple. Based on the official pages, the core path revolves around entering a prompt, optionally adding reference images, and generating results inside the same web environment.

Step One Describe The Intended Image Goal

The first step is straightforward: the user enters a prompt describing the image they want. That simplicity lowers the learning barrier and keeps the process centered on creative intent.

A Prompt First Workflow Keeps Entry Simple

This matters because many users do not want to study a complicated interface before getting a result. Starting with a prompt makes the product feel accessible to both casual creators and people working on practical drafts.

Step Two Add Reference Images When Needed

If the task calls for visual guidance, the workflow allows the user to include reference images. This appears especially useful for image-to-image use cases and more controlled revisions.

References Make Editing More Purposeful

Reference support is one of the most practical parts of the workflow. It helps when the goal is not to invent from scratch, but to adapt, refine, or steer the output toward a more specific direction.

Step Three Generate And Review The Results

After the prompt and optional references are in place, the user generates images and reviews the outputs. The official pages also make clear that sign-in is part of the generation process.

Review Is Part Of The Creative Process

This last step is easy to underestimate. In practice, good AI image work is often iterative. The first result may reveal what the prompt did well, what it misunderstood, and what needs to be refined next.
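The three steps above can be summarized as a small data model. This is only a sketch for thinking about the workflow: the class name `ImageRequest`, its fields, the `validate` helper, and the example values for aspect ratio and resolution are all hypothetical and are not taken from the platform, which is described here only as a browser-based workspace.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ImageRequest:
    """Hypothetical model of one generation request; not the platform's API."""
    prompt: str
    reference_images: List[str] = field(default_factory=list)  # optional file paths
    aspect_ratio: Optional[str] = None  # assumed format, e.g. "4:5"
    resolution: Optional[str] = None    # assumed label, e.g. "1K"

    def mode(self) -> str:
        # With references the request is image-guided; otherwise prompt-only.
        return "image-to-image" if self.reference_images else "text-to-image"


def validate(request: ImageRequest, signed_in: bool) -> List[str]:
    """Return the problems that would block generation (empty list if none)."""
    problems = []
    if not request.prompt.strip():
        problems.append("prompt is empty")
    if not signed_in:
        problems.append("sign-in is required before generating")
    return problems


draft = ImageRequest(prompt="Minimal poster for a summer coffee launch", aspect_ratio="4:5")
print(draft.mode())                      # text-to-image
print(validate(draft, signed_in=False))  # ['sign-in is required before generating']
```

Writing the request down this way makes the review step easier: after each round, only the prompt or the reference list changes, so it is clear what was adjusted between attempts.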

How The Two Workflows Compare In Practice

A clear comparison helps show why both workspaces can belong on the same platform without feeling redundant.

| Dimension | GPT Image 2 Workspace | Nano Banana Workspace |
| --- | --- | --- |
| Primary feel | More structured and commercially oriented | More flexible and exploratory |
| Best starting point | Prompt-led image drafting | Prompt plus reference-based iteration |
| Suitable tasks | Posters, product visuals, UI-like concepts, infographics | Style changes, concept exploration, image variation |
| Workflow clarity | Strong for users with a defined goal | Strong for users refining direction gradually |
| Learning cost | Relatively approachable | Also approachable, with broader experimentation value |
| Likely user type | Marketers, founders, visual communicators | Designers, creators, iterative visual explorers |
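For readers who prefer a rule of thumb, the "Suitable tasks" row of the comparison can be read as a simple lookup. The sketch below is an illustration only: the task labels are adapted from the table, while the function name `suggest_workspace` and the mapping itself are assumptions, not guidance published by the platform.

```python
# Task labels adapted from the comparison table; the mapping is an
# illustrative assumption, not a rule published by the platform.
STRUCTURED_TASKS = {"poster", "product visual", "ui concept", "infographic"}
EXPLORATORY_TASKS = {"style change", "concept exploration", "image variation"}


def suggest_workspace(task: str) -> str:
    """Suggest a workspace for a task label, or 'either' if the label is unknown."""
    key = task.strip().lower()
    if key in STRUCTURED_TASKS:
        return "GPT Image 2"
    if key in EXPLORATORY_TASKS:
        return "Nano Banana"
    return "either"


print(suggest_workspace("Poster"))           # GPT Image 2
print(suggest_workspace("image variation"))  # Nano Banana
print(suggest_workspace("logo"))             # either
```

The "either" fallback is deliberate: tasks that fall outside the table still need the user's own judgment about whether structure or exploration matters more.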

Where Users Should Stay Realistic

A useful review should not turn workflow differences into exaggerated claims. The official pages show a promising structure, but they do not remove the normal realities of AI image generation. Prompt quality still matters. Complex requests may still require multiple tries. Reference-based work may improve control, but it does not mean every detail will behave exactly as expected every time.

There is also a difference between having multiple workspaces and fully mastering them. Users still need judgment to decide when a structured output path is better than a more exploratory one. That is not a flaw in the platform. It is simply part of how creative tools work in practice.

Which Workflow Fits Which Creative Need

If the task involves more structured image communication, the GPT Image 2 workspace appears easier to justify. It makes sense for people creating visuals that need discipline, clarity, and a stronger commercial finish. If the task is more about variation, transformation, or guided experimentation, Nano Banana appears better aligned with that process.

That is what makes this platform more interesting than a generic AI image site. It is not only offering image generation. It is offering two distinct ways to approach image work, and that difference is meaningful. For creators trying to match the tool to the task instead of forcing every task through the same interface, that is where the real value begins.
