The quiet anxiety that now accompanies uploading a document to an AI tool is not paranoia; it is a rational response to an industry where Terms of Service often grant broad data usage rights, model training pipelines are hungry for text, and news stories about leaked training data appear with uncomfortable regularity. Researchers hold unpublished papers that represent years of work. Legal teams exchange deal memos before anything is public. Startups share pitch decks in confidence. Yet the most capable AI tools often come from companies whose business model depends on data accumulation, and whose privacy policies can be generously described as opaque.

When I encountered an AI document translator that publicly commits to a specific set of privacy practices — automatic deletion of processed files, a clear statement of no storage, no sharing, and no use of user documents for model training — I did not take the claims at face value. I designed a testing protocol that treated the platform as untrusted by default and observed what happened at each stage of a document’s lifecycle within the system.

The Privacy-First Testing Protocol

To evaluate data handling beyond the privacy policy text, I ran two simulated workflows typical of high-sensitivity environments. First, I uploaded an unpublished draft of a machine learning research paper that was still under double-blind review — the kind of content where pre-publication exposure could compromise the review process. Second, I uploaded a draft memorandum of understanding between two companies that had not yet been signed, containing commercial terms marked “CONFIDENTIAL.” In both cases, I monitored what happened after processing: whether the documents persisted in any visible history, whether they could be accessed via a refreshed session, and what the platform explicitly communicated about retention.

Testing the Unpublished Research Draft

The core fear for an academic is that a preprint uploaded to a cloud AI service might be silently absorbed into a training corpus, or that an API partner might access the text under a broad data-sharing clause. Even if unintentional, the reputational damage of a leaked draft can be severe.

Uploading and Processing the Double-Blind Paper

The paper, written in English and intended for a computer vision conference, contained LaTeX formatting, embedded figures, and a citation block. I selected a translation into Korean to simulate a scenario where a collaborator in Seoul needed to review the draft. Within the standard ~12-second processing window, the translated PDF appeared in the interface. The layout held: figures stayed in position, citation brackets remained intact, and the translation quality was good enough for a collaborator to assess the paper’s contribution without language friction.

What Happened After the Session Ended

After downloading the translated output, I closed the browser tab and opened a fresh session. The document was not listed in any history or recent-items panel; the product does not maintain a user-accessible document library that persists across sessions. I then looked for any public documentation suggesting archival or delayed deletion, and found that the stated policy is explicit: files are processed, served, and automatically removed. While I have no forensic access to the backend to verify deletion logs, the user-facing behavior was consistent with the stated “no storage” claim. There was no inbox, no cloud drive, and no “your documents” section, just a transient processing pipeline.

This contrasts sharply with platforms that save every interaction to a personal history by default, where an account compromise could expose months of sensitive uploads. The absence of persistent storage reduces the attack surface considerably, though it also means you cannot return to a past translation session to re-download the file later without re-uploading.

Practical Trust and Its Limits

From a practical user perspective, the combination of transient processing and the explicit no-training pledge creates a trust profile that is meaningfully different from general-purpose AI platforms. However, it is worth stating the obvious: the system is cloud-based, and data is transmitted over the internet to a server for processing. For documents subject to classified government handling requirements or the strictest corporate data residency policies, no cloud service, regardless of its privacy promises, will satisfy a compliance officer. This level of protection covers most professional use, but it is not a substitute for an air-gapped local solution when regulations demand one.

Testing the Confidential Business Draft

The second test involved a draft MoU between two companies with mutual confidentiality obligations. This document type carries practical consequences beyond academic reputation: premature disclosure could affect negotiations, and depending on jurisdiction, could even trigger regulatory disclosure obligations for one of the parties.

Processing a Sensitive Commercial Document

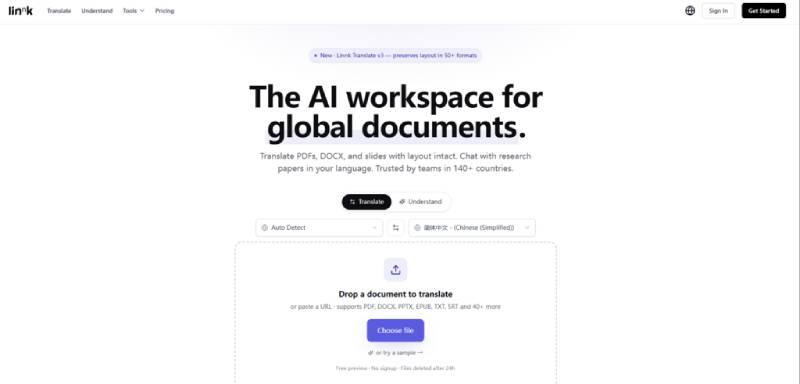

The draft MoU was in English, and I requested a Chinese translation for a counterparty’s legal team. The document contained a signature block, clause numbering, and a schedule listing sensitive commercial terms. Processing the file through the Linnk AI document translator resulted in a Chinese version that preserved the clause structure, the signature layout, and the schedule formatting. Just as importantly, the interface did not suggest that the content was being categorized, tagged, or analyzed for any purpose beyond the requested translation. There was no secondary insight panel automatically generating summaries for this document; the system simply performed the requested task and presented the output.

Why the Default Settings Matter Here

Many AI platforms default to “helpful” features that the user did not ask for: automatically generating summaries, extracting entities, or offering to share insights. These features, while convenient, create additional data processing touchpoints that a privacy-conscious user may not want. In my testing, the translation workflow did exactly what was requested and nothing visibly extra — a restraint that, for sensitive documents, is itself a feature.

The limitation to acknowledge is that someone handling extremely sensitive documents should still carefully review the full privacy policy and terms of any service before uploading, and should consider whether the convenience gain justifies the transmission. The tool reduces the risk relative to platforms that openly train on user data, but it does not eliminate the need for judgment.

How the Document Processing Flow Handles Your Data

Understanding what happens to a document after it leaves your machine is essential for privacy-informed use. The product flow is straightforward and contains no hidden persistent-storage steps that I could identify during testing.

Step 1: Submit the Document Through an Encrypted Channel

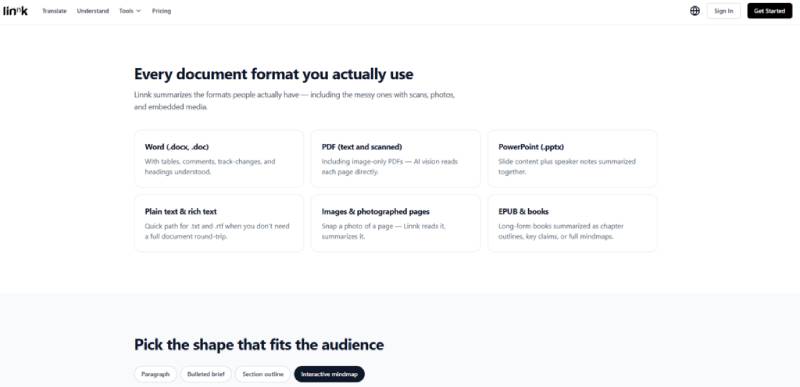

You provide the source file either by dragging it onto the web application or by pasting a URL. The connection is encrypted during transmission. This step applies to all supported formats — PDF, DOCX, images, EPUB — with no need to strip metadata or convert to a different format beforehand.

What the System Receives

The platform accesses the file for the sole purpose of performing the requested task — translation, summarization, or mindmap generation. There is no user account required for the initial free preview, which means that for testing or occasional use, the document is not even associated with a persistent identity. For returning users who choose to create an account, the privacy commitments remain the same: the file is processed and then removed.

Step 2: Review the Processed Output and Decide What to Keep

The translation, summary, or mindmap is presented for you to review and, in the case of full translations, download. The interaction is a single session; once you leave, the processed file is not retained.

Downloading and Closing the Loop

You download the translated file or copy the analysis you need, and that is the endpoint. There is no lingering copy accessible from a dashboard. For a user whose primary concern is downstream data exposure — the risk that a document leaks through a compromised account months later — this session-based approach offers a meaningful structural advantage over persistent-cloud-storage models.
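The session-based flow described above can be illustrated with a minimal Python sketch. This is not the platform's actual implementation, which is not visible from the outside; `process_transiently` and `fake_translate` are hypothetical names, and the "translation" is a placeholder that merely upper-cases the bytes. The sketch shows only the general pattern of doing the work inside a scratch directory that is removed as soon as the result has been produced.

```python
import tempfile
from pathlib import Path
from typing import Callable, Tuple


def process_transiently(
    payload: bytes,
    filename: str,
    transform: Callable[[bytes], bytes],
) -> Tuple[bytes, Path]:
    """Run `transform` on an uploaded file inside a scratch directory.

    Returns the output bytes plus the scratch path that was used, so the
    caller can confirm that the path no longer exists afterwards.
    """
    with tempfile.TemporaryDirectory(prefix="upload-") as scratch:
        # Keep only the final path component of the client-supplied name.
        source = Path(scratch) / Path(filename).name
        source.write_bytes(payload)
        result = transform(source.read_bytes())
    # Leaving the `with` block deletes the directory and everything in it.
    return result, source


def fake_translate(data: bytes) -> bytes:
    # Hypothetical stand-in for the real task; it only upper-cases the text.
    return data.upper()


output, scratch_path = process_transiently(
    b"confidential draft", "mou.txt", fake_translate
)
print(output)                 # b'CONFIDENTIAL DRAFT'
print(scratch_path.exists())  # False
```

The design choice worth noting is that the only thing that outlives the call is the returned result. Nothing is indexed by user or session, which is why a later account compromise would have no stored documents to expose under this model.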

Comparing Data Handling Approaches Across the Landscape

Placing the privacy practices in context helps clarify why they matter, without overstating the differences. The table below compares common data handling models found among AI translation and document tools, based on publicly available information and vendor documentation.

| Data Handling Practice | Common in General-Purpose AI Tools | Linnk’s Stated Practice | User-Facing Implication |
| --- | --- | --- | --- |
| Document storage after processing | Often saved to account history or cloud drive | Automatic deletion after processing | No persistent exposure; no history to audit or breach |
| Use of user documents for model training | Varies; some reserve rights to use data for service improvement | Explicitly stated: no storage, no sharing, no training use | Reduced risk of proprietary content appearing in model outputs |
| Processing transparency | May lack clear communication about data lifecycle | Public page states data handling commitments clearly | Easier to evaluate compliance without contacting support |
| Encryption in transit and at rest | Common but not universally documented | Encrypted during transmission, no persistent at-rest storage to encrypt | Attack surface limited to the active processing window |

This is not an argument that the tool is uniquely secure; it is simply that its data handling model is well-aligned with the needs of users who cannot afford data leakage. For a researcher with an unsubmitted manuscript or a lawyer with a client’s pre-filing draft, the difference between “we may use your data” and “we do not store or train on your data” is the difference between a tool they can use and one they cannot.

Realistic Privacy Considerations That Still Apply

No honest assessment can ignore the boundaries of what any cloud AI service can promise.

The tool processes documents on remote servers, which means data transits the internet. For users in jurisdictions with strict data localization laws, this alone may require internal legal approval before uploading any client-identifiable content.

The automatic deletion claim refers to the file after processing, but the precise mechanics of deletion — whether it is an immediate wipe or a scheduled cleanup — are not independently verifiable by an end user. The user-facing behavior is consistent with immediate removal, and the platform’s public statements are unambiguous, but the trust relationship ultimately depends on believing that a vendor’s infrastructure operates as described.
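To make the distinction between an immediate wipe and a scheduled cleanup concrete, here is a small Python sketch of both strategies. It is purely illustrative and assumes nothing about how the vendor's infrastructure works; the function names, the one-hour retention window, and the file names are all hypothetical.

```python
import os
import tempfile
import time
from pathlib import Path
from typing import List


def delete_immediately(path: Path) -> None:
    """Immediate removal: unlink the file as soon as the result is served."""
    path.unlink(missing_ok=True)


def sweep_expired(directory: Path, max_age_seconds: float, now: float) -> List[str]:
    """Scheduled cleanup: remove files last modified before the cutoff."""
    removed = []
    for entry in sorted(directory.iterdir()):
        if entry.is_file() and now - entry.stat().st_mtime > max_age_seconds:
            entry.unlink()
            removed.append(entry.name)
    return removed


with tempfile.TemporaryDirectory() as scratch:
    root = Path(scratch)
    now = time.time()

    fresh = root / "fresh.pdf"
    stale = root / "stale.pdf"
    fresh.write_bytes(b"just processed")
    stale.write_bytes(b"processed two hours ago")
    os.utime(stale, (now - 7200, now - 7200))  # backdate the file by two hours

    # A sweep with a one-hour window removes only the older file.
    removed = sweep_expired(root, max_age_seconds=3600, now=now)
    fresh_survived_sweep = fresh.exists()

    # Immediate deletion leaves no window at all.
    delete_immediately(fresh)
    fresh_after_immediate = fresh.exists()

print(removed)                # ['stale.pdf']
print(fresh_survived_sweep)   # True
print(fresh_after_immediate)  # False
```

Under the sweep strategy a file can sit on disk for up to the retention window before it disappears, while from the user's side both strategies look identical: the document is simply absent from the interface. That is why the user-facing behavior alone cannot settle which one a given service uses.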

No privacy policy can protect against client-side compromises: a compromised browser extension, screen-capture malware, or a lost device can expose documents before they ever reach the server. The tool’s data handling is one link in a chain; the user’s own device hygiene remains essential.

The Type of User Who Should Care Most About This

The privacy-first design is not a feature that everyone values equally. Casual users translating a menu or a public news article will rightly be indifferent to data retention policies. But for a clearly defined set of professionals, the difference determines whether the tool can be used at all.

Academic researchers with pre-publication manuscripts, particularly those under peer review, need confidence that their work will not surface elsewhere before publication. Lawyers handling client-privileged documents must consider whether uploading a draft could, under their jurisdiction’s rules, constitute a waiver of privilege. Product managers at pre-launch startups sharing specs across international teams cannot afford competitive intelligence leaks. For these users, the platform’s data handling practices are not a footnote in the product description — they are the precondition for using the tool at all.

The broader observation is that as AI tools become embedded in professional workflows, privacy expectations are shifting from “we trust the vendor” to “we verify the data lifecycle.” Linnk’s approach — transient processing, no training on user data, and public clarity about what happens to files — aligns with that shift. It does not make the tool suitable for every conceivable scenario, but it makes it usable in important ones where less transparent platforms are simply not an option.
